Blog Archives

AI Presidential Debate

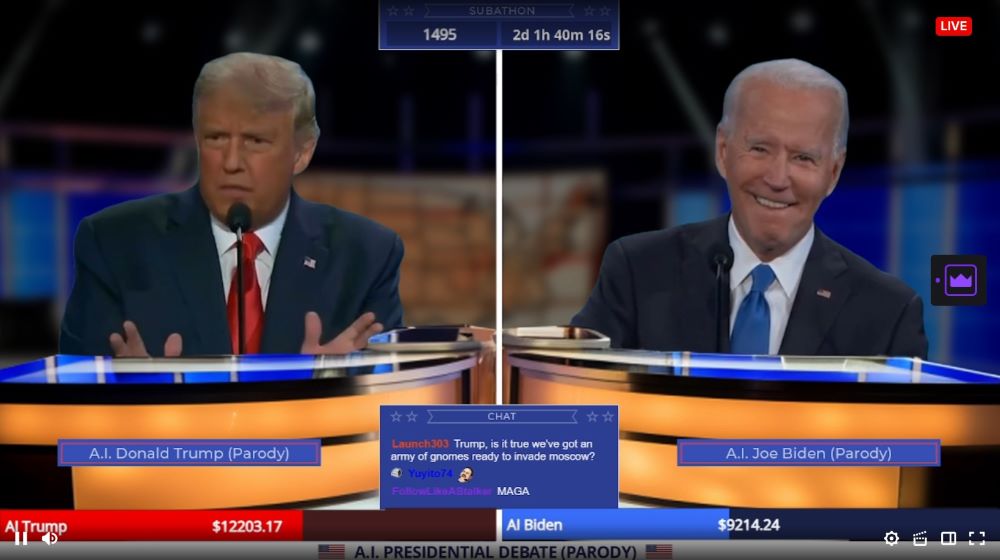

Oh boy, the future is now:

Welcome to TrumpOrBiden2024, the ultimate AI generated debate arena where AI Donald Trump and AI Joe Biden battle it out 24/7 over topics YOU suggest in the Twitch chat!

https://www.twitch.tv/trumporbiden2024

It’s best to just view a few minutes of it yourself, but this rank absurdity is an AI-driven “deep-fake” endless debate between Trump and Biden that is full of NSFW profanity and somehow splices in topics from Twitch chat. As the Kotaku article mentions:

The things the AI will actually argue about seem to have a dream logic to them. I heard Biden exclaim that Trump didn’t know anything about Pokémon, so viewers shouldn’t trust him. Trump later informed Biden that he couldn’t possibly handle genetically modified catgirls, unlike him. “Believe me, nobody knows more about hentai than me,” Trump declared. Both men are programmed to loosely follow the conversation threads the other sets, and will do all the mannerisms you’ve come to expect out of these debates, like seeing Biden react to a jab with a small chuckle. At one point during my watch, I saw the AI stop going at each other only to start tearing into people in the chat for having bad usernames and for not asking real questions.

It’s interesting how far we have come as a society and culture. At one point, deep-fakes were a major concern. Now, between ChatGPT, Midjourney/Stable Diffusion, and basic Instagram filters, there is a sort of democratization of AI taking place. Granted, most of these tools were given out for free to demonstrate the value of the groups who wish to eventually be bought up by multinationals, but what has been developed in such a short time is nevertheless amazing.

Of course, this all may well be the calm before the storm. Elon Musk’s lawyers tried to argue that video of him claiming that Teslas could drive autonomously “right now” (in 2016) was in fact a deep-fake. The judge was not amused and said Elon should then testify under oath that it wasn’t him in the video. The deep-fake claim was walked back quickly. But it is just a matter of time before someone gets arrested or sentenced based on AI-fabricated evidence, and when it comes out as such, things will get wild.

Or maybe they won’t. Impersonators have been around for thousands of years, people get thrown into jail on conventionally fabricated evidence all the time, and perjury is one of those in-your-face crimes whose threat tends to keep people honest (or quiet) on the stand.

I suppose in the meantime, it’s memetime. Until the internet dies.

ChatGPT

Came across a Reddit post entitled “Professor catches student cheating with ChatGPT: ‘I feel abject terror’”. Among the comments was one saying “There is a person who needs to recalibrate their sense of terror.” The response to that was this:

Although I am bearish on the future of the internet in general with AI, the concerns above just sort of made me laugh.

When it comes to doctors and lawyers, what matters are results. Even if we assume ChatGPT somehow made someone pass the bar or get a medical license – and they further had no practical exam components/residency for some reason – the ultimate proof is the real-world application. Does the lawyer win their cases? Do the patients have good health outcomes? It would certainly suck to be among the first few clients who prove the professionals had no skills, but that can usually be avoided by sticking to those with a positive record to begin with.

And let’s not pretend that fresh graduates who did everything legit are always going to be good at their jobs. It’s like the old joke: what do you call the person who passed medical school with a C-? “Doctor.”

The other funny thing here is the implicit assumption that a given surgeon knowing which drug to administer is better than an AI chatbot. Sure, it’s a natural assumption to make. But surgeons, doctors, and everyone in-between are constantly lobbied (read: bribed) by drug companies to use their new products instead. How many thousands of professionals started over-prescribing OxyContin after attending “all expenses paid” Purdue-funded conferences? Do you know which conferences your doctor has attended recently? Do they even attend conferences? Maybe they already use AI, eh?

Having said that, I’m not super-optimistic about ChatGPT in general. A lot of these machine-learning algorithms get their base data from publicly available sources. Once a few of the nonsense AIs get loosed in a Dead Internet scenario, there is going to be a rather sudden Ouroboros situation where ChatGPT consumes anti-ChatGPT nonsense in an infinite loop. Maybe the programmers can whitelist a few select, trustworthy sources, but that limits the scope of what ChatGPT would be able to communicate. And even in the best-case scenario, doesn’t that mean tight, private control over the only unsullied datasets?

Then again, if you are catering to just a few federated groups of people anyway, maybe that is all you need.

Dead Internet

There are two ways to destroy something: make it unusable, or reduce its utility to zero. The latter may be happening with the internet.

Let’s back up. I was browsing a Reddit Ask Me Anything (AMA) thread by a researcher who worked on creating “AI invisibility cloak” sweaters. The goal was to design “adversarial patterns” that essentially tricked AI-based cameras into no longer recognizing that a person was, in fact, a person. During the AMA though, they were asked what they thought about language-model AI like GPT-3. The reply was:

I have a few major concerns about large language models.

– Language models could be used to flood the web with social media content to promote fake news. For example, they could be used to generate millions of unique twitter or reddit responses from sockpuppet accounts to promote a conspiracy theory or manipulate an election. In this respect, I think language models are far more dangerous than image-based deep fakes.

This struck me as interesting, as I would have assumed deep-faked celebrity endorsements – or even straight-up criminal framing – would have been a bigger issue for society. But… I think they are right.

There has been a conspiracy theory floating around for a number of years called “The Dead Internet Theory.” This Atlantic article explains in more detail, but the premise is that the internet “died” in 2016-2017 and almost all content since then has been generated by AI and propagated by bots. That is clearly absurd… mostly. First, I feel like articles written by AI today are pretty recognizable as being “off,” let alone what the quality would have been five years ago.

Second, in a moment of supreme irony, we’re already pretty inundated with vacuous articles written by human beings trying to trick algorithms, to the detriment of human readers. It’s called “Search Engine Optimization” and it’s everywhere. Ever wonder why cooking recipes on the internet have paragraphs of banal family history before giving you the steps? SEO. Are you annoyed when a piece of video game news that could have been summed up with two sentences takes three paragraphs to get to the point? SEO. Things have gotten so bad though that you pretty much have to engage in SEO defensively these days, lest you get buried on Page 27 of the search results.

And all of this is (presumably) before AI has gotten involved belting out 10,000 articles a second.

A lot has already been said about polarization in US politics and misinformation in general, but I do feel like the dilution of utility of the internet has played a part in that. People have their own confirmation biases, yes, but it is also true that when there is so much nonsense everywhere, you retreat to the familiar. Can you trust this news outlet? Can you trust this expert citing that study? After a while, it simply becomes too much to research and you end up choosing 1-2 sources that you thereafter defer to. Bam. Polarization. Well, that and certain topics – such as whether you should force a 10-year-old girl to give birth – afford no ready compromises.

In any case, I do see there being a potential nightmare scenario of a Cyberpunk-esque warring AI duel between ones engaging in auto-SEO and others desperately trying to filter out the millions of posts/articles/tweets crafted to capture the attention of whatever human observers are left braving the madness between the pockets of “trusted” information. I would like to imagine saner heads would prevail before unleashing such AI, but… well… *gestures at everything in general.*

This AI Ain’t It

Sep 11

Posted by Azuriel

Wilhelm wrote a post called “The Folly of Believing in AI” and is otherwise predicting an eventual market crash based on the insane capital spent chasing that dragon. The thesis is simple: AI is expensive, so… who is going to pay for it? Well, expensive and garbage, which is the worst possible combination. And I pretty much agree with him entirely – when the music stops, there will be many a child left without a chair but holding a lot of bags, to mix metaphors.

The one problematic angle I want to stress the most though, is the fundamental limitation of AI: it is dependent upon the data it intends to replace, and yet that data evolves all the time.

Duh, right? Just think about it a bit more though. The best use-case I have heard for AI has been from programmers stating that they can get code snippets from ChatGPT that either work out of the box, or otherwise get them 90% of the way there. Where did ChatGPT “learn” code though? From scraping GitHub and similar repositories for human-made code. Which sounds an awful lot like what a search engine could also do, but never mind. Even in the extremely optimistic scenario in which no programmer loses their job to future Prompt Engineers, eventually GitHub is going to start (or continue?) to accumulate AI-derived code. That code will be scraped and reconsumed into the dataset, increasing the error rate and thereby lowering the value that the AI had in the first place.

Alternatively, let’s suppose there isn’t an issue with recycled datasets and error rates. There will be a lower need for programmers, which means less opportunity for novel code and/or new languages, as they would have to compete with much cheaper, “solved” solutions. We then get locked into existing code at current levels of function unless some hobbyists stumble upon the next best thing.

The other use-cases for AI are bad in more obvious, albeit understandable ways. AI can write tailored cover letters for you, or if you’re feeling extra frisky, apply for hundreds of job postings a day on your behalf. Of course, HR departments around the world fired the first shots of that war when they started using algorithms to pre-screen applications, so this bit of turnabout feels like fair play. But what is the end result? AI talking to AI? No person can or will manually sort through 250 applications per job opening. Maybe the most “fair” solution will just be picking people randomly. Or consolidating all the power into recruitment agencies. Or, you know, just nepotism and networking per usual.

Then you get to the AI-written house listings, product descriptions, user reviews, or office emails. Just look at this recent Forbes article on how to use ChatGPT to save you time in an office scenario:

The article states email and meetings represent 15% and 23% of work time, respectively. Sounds accurate enough. And yet rather than address the glaring, systemic issue of unnecessary communication directly, we are to use AI to just… sort of brute force our way through it. Does it not occur to anyone that the emails you are getting AI to summarize are possibly created by AI prompts from the sender? Your supervisor is going to get AI to summarize the AI article you submitted and have AI create an agenda for a meeting they call you in for; AI is then going to transcribe the meeting, and that transcript will be emailed to their supervisor and summarized again by AI. You’ll probably still be in trouble, but no worries, just submit 5000 job applications over your lunch break.

In Cyberpunk 2077 lore, a virus infected and destroyed 78.2% of the internet. In the real world, by some estimates as much as 90% of the internet will be synthetically generated by 2026. How’s that for a bearish case for AI?

Now, I am not a total Luddite. There are a number of applications for which AI is very welcome. Detecting lung cancer from a blood test, rapidly sifting through thousands of CT scans looking for patterns, potentially using AI to create novel molecules and designer drugs while simulating their efficacy, and so on. Those are useful applications of technology to further science.

That’s not what is getting peddled on the street these days though. And maybe that is not even the point. There is a cynical part of me that questions why these programs were dropped on the public like a free hit from the local drug dealer. There is some money exchanging hands, sure, and it’s certainly been a boon for Nvidia and other companies selling shovels during a gold rush. But OpenAI is set to take a $5 billion loss this year alone, and they aren’t the only game in town. Why spend $700,000/day running ChatGPT like a loss leader, when there doesn’t appear to be anything profitable being led to?

[Fake Edit] Totally unrelated news from last week: Microsoft, Apple, and Nvidia are apparently bailing out OpenAI in another round of fundraising to keep them solvent… for another year, or whatever.

I think maybe the Dead Internet endgame is the point. The collateral damage is win-win for these AI companies. Either they succeed with the AGI moonshot – the holy grail of AI that would change the game, just like working fusion power – or they fill the open internet with enough AI garbage to permanently prevent any future competition. What could a brand new AI company even train off of these days? Assuming “clean” output isn’t now locked down with licensing contracts, their new model would be facing off with ChatGPT v8.5 or whatever. The only reasonable avenue for future AI companies would be to license the existing datasets themselves in perpetuity. Rent-seeking at its finest.

I could be wrong. Perhaps all these LLMs will suddenly solve all our problems, and not just be tools of harassment and disinformation. Considering the big phone players are making deepfake software on phones standard this year, I suppose we’ll all find out pretty damn quick.

My prediction: mo’ AI, mo’ problems.

Posted in Commentary, Philosophy

Tags: AI, ChatGPT, Dead Internet Theory, Large Language Models, Luddite, What Could Possibly Go Wrong?