Category Archives: Philosophy

Past is Prologue

Starfield has been a wild success. Like, objectively: it was the best-selling game in September and has since become the 7th best-selling game for the year. And those stats are based on actual sale figures, unmuddied by Xbox Game Pass numbers. Which is astounding to think about.

[Fake Edit] A success… except in the Game of the Year department. Yikes. It’s at least nominated for Best RPG, along with (checks notes) Lies of P? No more sunlight between RPG-elements and RPG anymore, I guess. Doesn’t matter though, Baldur’s Gate 3 is going to continue drinking that milkshake.

Starfield having so many procedurally-generated planets though, is still a mistake. And it’s a mistake that Mass Effect: Andromeda took on the chin for all of gamedom a decade ago.

Remember Andromeda? The eagerly-anticipated BioWare follow-up to their cultural phenomenon trilogy? It ended up being a commercial flop, best remembered for terrible facial animations and effectively killing the golden goose. What happened? Procedurally-generated planets. Andromeda didn’t have them, but the (multiple) directors wanted them so badly that they wasted months and years fruitlessly chasing them until there were basically just 18 months left to pump out a game.

You can read the Jason Schreier retrospective (from 2017) for the rest of the story. And in total fairness, the majority of the production issues stemmed from EA forcing BioWare to use the Frostbite engine to create their game. But it is a fact that they spent a lot of time chasing the exploration “dream.”

Another of Lehiany’s ideas was that there should be hundreds of explorable planets. BioWare would use algorithms to procedurally generate each world in the game, allowing for near-infinite possibilities, No Man’s Sky style. (No Man’s Sky had not yet been announced—BioWare came up with this concept separately.)

[…] It was an ambitious idea that excited many people on the Mass Effect: Andromeda team. “The concept sounds awesome,” said a person who worked on the game. “No Man’s Sky with BioWare graphics and story, that sounds amazing.”

That’s how it begins. Granted, we wouldn’t see how No Man’s Sky shook out gameplay-wise until 2016.

The irony though, is that BioWare started to see it themselves:

The Mass Effect: Andromeda team was also having trouble executing the ideas they’d found so exciting just a year ago. Combat was shaping up nicely, as were the prototypes BioWare had developed for the Nomad ground vehicle, which already felt way better to drive than Mass Effect 1’s crusty old Mako. But spaceflight and procedurally generated planets were causing some problems. “They were creating planets and they were able to drive around it, and the mechanics of it were there,” said a person who worked on the game. “I think what they were struggling with was that it was never fun. They were never able to do it in a way that’s compelling, where like, ‘OK, now imagine doing this a hundred more times or a thousand more times.’”

And there it is: “it was never fun.” It never is.

I have logged 137 hours in No Man’s Sky, so perhaps it is unfair of me to suggest procedural exploration is never fun. But I would argue that the compelling bits of games like NMS are not the exploration elements – it’s stuff like resource-gathering. Or in games like Starbound, it’s finding a good skybox for your base. No one is walking around these planets wondering what’s over the next hill in the same way one does in Skyrim or Fallout. We know what’s over there: nothing. Or rather, one of six Points of Interest seeded by an algorithm to always be within 2km walking distance of where you land.

Exploring a procedurally generated world is like reading a novel authored by ChatGPT. Yeah, there are words on a page in the correct order, but what’s the point?

Getting back to Starfield though, the arc of its design followed almost the reverse of Andromeda. In this sprawling interview with Bruce Nesmith (lead designer of Skyrim and Bethesda veteran), he talked about how the original scope was limited to the Settled Systems. But then Todd Howard basically pulled “100 star systems” out of thin air and they went with it. And I get it. If you are already committed to using procedural generation on 12 star systems, what’s another 88? A clear waste of time, obviously.

And that’s not just an idle thought. According to this article, as of the end of October, just over 3% of Xbox players have the “Boots on the Ground” achievement that you receive for landing on 100 planets. Just thinking about how many loading screens that would take exhausts me. Undoubtedly, that percentage will creep up over time, but at some point you have to ask yourself what’s the cost. Near-zero if you already have the procedural generation engine tuned, of course. But taking that design path itself excludes things like environmental storytelling and a richer, more tailored gaming experience.

Perhaps the biggest casualty is one more felt than seen: ludonarrative. I talked about this before with Starfield, but one of the main premises of the game is exploring the great unknown. Except everything is already known. To my knowledge, there is not a single planet in any system which doesn’t have Abandoned Mines or some other randomly-placed human settlement somewhere on it. So what are we “exploring” exactly? And why would anyone describe this game as “NASApunk” when it is populated with millions of pirates literally everywhere? Of course, pirates are there so you don’t get too bored exploring the boring planets, which are only boring because they exist.

Like I said at the top, Starfield has been wildly successful in spite of its procedural nonsense. But I do sincerely hope that, at some point, these AAA studios known for storytelling and/or exploration stop trying to make procedural generation work and just stay in their goddamn lane. Who out here is going “I really liked Baldur’s Gate 3, so I hope Larian’s next game is a roguelike card-battler”? Whatever, I guess Todd Howard gets a pass to make his “dream game” after 25 years. But as we sleepwalk into the AI era, I think it behooves these designers to focus on the things that they are supposedly better at (for now).

We learn from our mistakes eventually, right? Right?

Incentivizing Morality

In the comments of my last post, Kring had this to say:

In an RPG I don’t think there should be a game mechanic rewarding “the correct way” to play it. The question isn’t why aren’t we all murder-hoboing through a game where you can be everything. The question is, if you can be anything, why would you choose to be a murder-hobo?

In the vast majority of games, “the good path” is incentivized by default. This usually manifests in terms of a game’s ending, which sees the hero and his/her scrappy teammates surviving and defeating the antagonist when enough altruistic flags are raised. Conversely, being selfish and/or evil typically results in a bad ending where the hero possibly dies, or becomes just as corrupt as the original antagonist, and most of the party members have abandoned you (or been killed). It’s almost a tautology that way – the good path is good, the bad path is bad.

Game designers usually layer on additional incentives for moral play though. The classical trope is when the hero saves the poor village and then refuses to accept the reward… only to be given a greater reward later (or sometimes immediately). I have often imagined a hypothetical game in which the good path is not only unrewarding, but actively punished. How betrayed do you think players would feel if doing good deeds resulted in the bad guy winning and all their efforts coming to naught? It would probably be as unsatisfying in such a game as it is IRL.

Incentives are powerful things that guide player behavior. And sometimes these incentives can go awry.

Bioshock is an example of almost archetypal game morality. As you progress through the game, you are given the choice of rescuing Little Sisters or harvesting them to consume their power. While that may seem like an active tradeoff, the reality is that you end up getting goodies after rescuing three Little Sisters, putting you about on par with where you would have been had you harvested them. By the end of the game, the difference in total power (ADAM resource) is literally about 8%. Meanwhile, if you harvest even one (or 2?) Little Sister, you are locked into the bad ending.

An example of contrary incentives comes from Deus Ex: Human Revolution. In this one, you are given the freedom of choosing several different ways to overcome challenges. For example, you can run in guns blazing, sneak through ventilation shafts, and/or hack computers. The problem is the “and/or.” When you perform a non-lethal takedown, for example, you get some XP. You also get XP for straight-up killing enemies. But what if you kill someone you already rendered unconscious with a non-lethal takedown? Believe it or not, extra XP. Even worse, the hacking minigame allows you to earn XP and resources whereas acquiring the password to unlock the device gives nothing. The end result is that the player is incentivized to knock out enemies, then kill them, search everywhere for loot but ignore passwords/keys so you can hack things instead, and otherwise be the most schizophrenic spy ever.
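To make the arithmetic of that incentive concrete, here is a minimal sketch. The XP values below are invented for illustration and are not the game's real numbers, and the names (`XP`, `total_xp`, `best_plan`) are hypothetical. The only assumption carried over from the description above is that rewards stack: a takedown pays, a kill pays, a hack pays, and a found password pays nothing.

```python
# Hypothetical XP values, made up for illustration only.
XP = {
    "nonlethal_takedown": 50,
    "kill": 10,
    "hack": 25,
    "use_password": 0,
}

def total_xp(actions):
    """Sum the XP for a sequence of actions against one enemy or device."""
    return sum(XP[action] for action in actions)

def best_plan(plans):
    """Return the name of the plan that pays the most XP."""
    return max(plans, key=lambda name: total_xp(plans[name]))

ENEMY_PLANS = {
    "pacifist": ["nonlethal_takedown"],
    "lethal": ["kill"],
    "knock out, then kill": ["nonlethal_takedown", "kill"],
}

DOOR_PLANS = {
    "use found password": ["use_password"],
    "hack anyway": ["hack"],
}

if __name__ == "__main__":
    print(best_plan(ENEMY_PLANS))
    print(best_plan(DOOR_PLANS))
```

As long as every action pays a positive amount and the payouts add up, the stacked plan wins no matter which specific numbers are plugged in. The dominance only disappears if killing an unconscious enemy pays zero and the password pays the same as the hack.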

Does DE:HR force you to play that way? Not directly. Indeed, it has a Pacifist achievement as a reward for sticking just to non-lethal takedowns. But forgoing the extra XP means you have fewer gameplay options for infiltrating enemy bases for a longer amount of time, which can result in you pigeonholing yourself into a less fun experience. How else could you discover the joy that is throwing vending machines around with your bare augmented hands without having a few spare upgrades?

Speaking of less fun experiences, consider Dishonored. This is another freeform stealth game where you are given special powers and let loose to accomplish your objective as you choose. However, if you so happen to choose lethal takedowns too many times, the environment becomes infested with more hostile vermin and you end up with the bad ending. I don’t necessarily have an issue with the enforced morality system, but limiting oneself to non-lethal takedowns means the majority of weapons (and some abilities) in the game are straight-up useless. This leads you to tackle missions in the exact same way every time, with no hope of getting any more interesting abilities, tools, or even situations.

I bring all this up to answer Kring’s original question: why choose to be the murder hobo in Starfield? Because that’s what the game designers incentivized, unintentionally or not. Bethesda crafted a gameplay loop that:

- Makes stealth functionally impossible

- Makes non-lethal attacks functionally impossible

- Radically inflates the cost of ammo

- Severely limits inventory space

- Gates basic character functions behind the leveling system

- Has a Persuasion system run by hidden dice rolls

- Features no lasting consequences of note

Does this mean you have to steal neutral NPCs’ spaceships right from under them and pawn them lightyears away? Or pickpocket every named NPC you encounter? No, you don’t. Indeed, some people would suggest that playing that way is “optimizing the fun out of the game.”

But here’s the thing: you will end up feeling punished for most of the game, because the designer-based incentives do not align with your playstyle. In every combat encounter – which will be the primary source of all credits and XP in the game regardless of how you play[1] – you will be acutely aware of how little ammo you have left, of switching to guns that you don’t like and that take longer to kill enemies with, and of being stuck with smaller spaceships that perform worse in the frequent space battles and don’t offer the quality-of-life features you would enjoy having. Sinking points into the Persuasion system will make those infrequent opportunities more successful, but those very same points mean you have fewer combat or economic bonuses which, again, will leave you miserable in the rest of the game.

Can you play any way you want in Starfield in spite of that? Sure. Well… not as a pacifist. Or someone who sneaks past enemies. Or talks their way out of every combat encounter. But yes, you can avoid being a total murder hobo. You can also turn down the graphical settings to their lowest level and change the resolution to 800×600 to roleplay someone with vision problems. Totally possible.

My point is that gameplay incentives matter. Game designers don’t need to create strict moral imperatives – in fact, I would prefer they didn’t considering how Dishonored felt to play – but they should take care to avoid unnecessary friction. Imagine if Deus Ex: Human Revolution did not award extra XP for killing unconscious NPCs, and using found passwords automatically gave you all the bonus XP/resources that the hacking game offers. Would the game get worse or more prescriptive? No! If anything, it expands the roleplaying opportunities because you are no longer fighting the dissonance the system inadvertently (or sloppily) creates.

In Starfield’s case, I’m a murder hobo because the game doesn’t feel good to play any other way. But at the root of that feeling, there lies a stupidly simple solution:

- Let players craft ammo.

That’s it. Problem solved – I’d hang up my bloody hobo hat tomorrow.

Right now the outpost system is a completely pointless, tacked-on feature. If you could craft your own ammo though, suddenly everyone wants a good outpost setup, which means players are flying around and exploring planets to find these resources. Once players have secured a source of ammo, credits become less critical. This removes the incentive to loot every single gun from every single dead pirate, which means less time spent fighting the awful inventory and UI. With that, being a murder hobo is more of a lifestyle choice than a dissonance you have to constantly struggle against.

That’s a lot of words to essentially land on leveraging the Invisible Hand to guide player behavior. And I know that there will be those that argue that incentives are irrelevant or unnecessary, because players always have the choice to play a certain way even if it is “suboptimal.” But I would say to you: why play that game? Unless you are specifically a masochist, there are much better games to roleplay as the good guys in than Starfield. You can do it, and there are good guy choices to make, but even Bethesda’s other games are infinitely better. And that’s sad. Let’s hope that they (or mods, as always) turn it around.

1. I have read some blogs that suggest you can utilize the outpost system to essentially farm resources, turn them into goods to vendor, which nets both credits and crafting XP. So, yeah, technically you don’t have to rely on combat encounters for credits. However, you can’t progress through the story this way, and I’m not sure that using outposts in this fashion is all that functionally different from simply stealing everything.

Murder Hobo

In Starfield, I roleplay exclusively as a murder hobo. Oddly enough, Wiktionary has an entry on that:

murderhobo (plural murderhobos or murderhoboes)

- (roleplaying games, derogatory or humorous) A character who wanders the gameworld, unattached to any community, indiscriminately killing and looting.

I was reflecting on this the other day. I found myself on a planet and inexplicably, irrationally, exploring. Everything is procedurally generated, there is zero environmental storytelling, and the payout for fully scanning a planet is not even remotely worth your time. But… I do it occasionally. So there I was, walking towards a Point of Interest, and then a ship landed nearby. These are technically random encounters, but the ships often leave the area stupidly quickly, making you wonder why Bethesda bothered programming them in.

So, I zip over there fast as I can, expecting some pirate action. Instead, they were neutral NPCs. I talk to them, they say they are low on supplies, and ask for some water. I give it to them. Then I check out their ship. Lockpick my way past the hatch, empty their cargo hold of cash and valuables, and then… look at the captain’s chair. Then I sit in the captain’s chair and blast off into space. A few menu screens later, I land the ship in New Atlantis, register it, sell it, and then fast travel back to the planet I was exploring originally.

In the abstract, I think this sequence was literally the most psychopathic thing I have ever done in a (non-Rimworld) videogame. This small group of people landed on an uncharted planet, desperate for supplies. A random passerby graciously gave them water. Then, moments later, they had to watch as their only means of survival was stolen out from under them. They are literally stranded on a desolate planet with no breathable atmosphere, no shelter, no hope.

Also, no consequences.

Now, obviously that is the problem here. I do not usually murder hobo my way through Baldur’s Gate 3, or Cyberpunk 2077, or Mass Effect, or really most other games. I was thinking about that though: why don’t I? What is enough of a consequence to alter my behavior? Some kind of automatic karma penalty like in Fallout? That often led to some arguably more murder hobo-ish behavior insofar as stealing from a “bad guy” was apparently worse than just killing him and taking the now-ownerless items. Companion dissatisfaction? That can certainly be annoying, especially when you want to be a bit more “Renegade” in your dialog choices. Often though, this can be gamed by simply selecting different companions, doing what you wanted to do, and then swapping them back in once you’re done.

Honestly, I think it comes down to the possibility of accountability. I do not know every permutation of your choices in Baldur’s Gate 3, but I have read enough posts and interviews to know that characters you interact with in Act 1 may or may not show up in Act 2 and Act 3 based on your actions. That leads one to a different posture when it comes to negotiations; the more people survive, the more possible quest givers exist for the late-game. This requires a certain level of detail though, which is not always possible in a more sandbox-lite environment.

One method I would like to see though, is almost a metagame appeal to empathy. Every named NPC in Starfield carries 800-1200 credits, which is kind of a lot for how easy it is to pickpocket them. If you lose the roll, you get caught, and have to Quick Load your way out of consequence. But if you succeed… nothing happens other than credits in your pocket. What if NPCs had dialog lamenting their loss of credits? About how they won’t be able to make rent payments? What if they asked other NPCs (or even you) for help looking for a lost Credstick or whatever? What if that group of now-stranded civilians put out a mayday asking for rescue? Or really just a personal appeal to return their ship?

Sometimes being a murder hobo is its own reward, but often I think it is just a natural consequence of game incentives/lack of disincentives combined with a failure of immersion. If NPCs don’t matter, it doesn’t matter what happens to them. You can make them matter using elaborate penalty systems or story hooks, or make them matter by making them “real” enough to care about.

Or maybe this is all just me, and I have a bit of the Dark Urge IRL.

Procedural Dilemma

One of the promises of procedural generation in gaming is that each experience will be unique, because it was randomly generated. The irony is that the opposite is almost always the case, as designers seem to lack the courage to commit. Or perhaps they recognize that true randomness makes for bad gameplay experiences and thus put in guardrails that render the “procedural” bits moot.

Both Starfield and No Man’s Sky feature procedurally generated planets with randomized terrain, resources, flora, and fauna. Both games allow you to land anywhere on a given planet. But neither[1] game allows there to be nothing on it. There are desolate moons with no atmosphere, yes, but in both games there will be some Point of Interest (PoI) within 2 km of your landing location in any direction. Sometimes several. And the real kicker is that there are always more PoIs everywhere you look.

There is not one inch of the universe in these games that doesn’t already have monuments or outposts on it, and the ludonarrative dissonance of that fact is never resolved.

The dilemma is that true procedural generation probably leads to even worse outcomes.

Imagine that the next eight planets you land on have zero PoIs. No quest markers, no resources of note, no outposts, no nothing. How interested are you in landing on a ninth planet? Okay, but imagine you can use a scanner from orbit to determine there are no PoIs or whatever. So… the first eight planet scans come up with nothing, are you scanning the ninth planet? At some point players are going to want some indication of where the gameplay is located, so they know where to point their ship. Fine, scanners indicate one planet in this system has two “anomalies.” Great, let’s go check it out.

But hold up… what was the point of procedural generation in that scenario? There isn’t much of a practical difference between hand-crafted planets and procedurally-generated-as-interesting planets surrounded by hundreds of lifeless ones. Well, other than the fact that those random PoIs in the latter case better be damn interesting lest players get bored and bounce off your game due to bad RNG.
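The two designs in this dilemma can be sketched in a few lines. This is a toy model under stated assumptions, not either game's actual algorithm: the planet is a flat square map, PoIs are points, and the 2 km figure is the one mentioned above. All function names (`random_pois`, `nearest_distance`, `with_guardrail`) are hypothetical.

```python
import math
import random

def random_pois(rng, count, size_km):
    """'True' randomness: scatter `count` PoIs uniformly over a square map."""
    return [(rng.uniform(0, size_km), rng.uniform(0, size_km)) for _ in range(count)]

def nearest_distance(landing, pois):
    """Distance in km from the landing site to the closest PoI (inf if none)."""
    return min((math.dist(landing, poi) for poi in pois), default=math.inf)

def with_guardrail(rng, landing, pois, max_km=2.0):
    """Guardrailed version: if no PoI is within max_km, spawn one nearby."""
    if nearest_distance(landing, pois) <= max_km:
        return list(pois)
    angle = rng.uniform(0, 2 * math.pi)
    # Stay strictly inside the radius so the guarantee always holds.
    radius = rng.uniform(0.25, 0.9) * max_km
    x, y = landing
    extra = (x + radius * math.cos(angle), y + radius * math.sin(angle))
    return list(pois) + [extra]

if __name__ == "__main__":
    rng = random.Random(42)
    landing = (500.0, 500.0)
    sparse = random_pois(rng, count=3, size_km=1000.0)
    print("unguarded nearest PoI (km):", nearest_distance(landing, sparse))
    guarded = with_guardrail(rng, landing, sparse)
    print("guarded nearest PoI (km):", nearest_distance(landing, guarded))
```

With only a handful of PoIs on a large map, the unguarded version will usually leave the player kilometers from anything. The guardrail fixes that by construction, which is exactly why every landing site ends up feeling the same.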

Minecraft comes up as an example of procedural generation done right, and I largely agree. However, it is “one world” and you are not expected to hop from one map to the next. The closest space game to resolve the dilemma for me has been Starbound + Frackin’ Universe mod – some planets had “dungeon” PoIs and/or NPCs and many did not. Each star system has at least one space station though, so it’s not completely random, but it’s very possible to, for example, land on a bunch of Eden planets or whatever and not find an exact configuration that you want for a base.

As I mentioned more than ten years ago (!!), procedural generation is the solution to exactly one problem: metagaming. If you don’t want a Youtube video detailing how to “get OP within the first 10 minutes of playing” your game, you need to randomize stuff. But a decade later, I think game designers have yet to fully complete the horseshoe of leaning all the way into procedural generation until you come right back around to hopping from a few hand-crafted planets and ignoring the vast reaches of uninteresting space.

1. NMS may have actually introduced truly lifeless planets with no PoIs in one of its updates. They are not especially common, however, as one would otherwise expect in a galaxy.

The Nature of Art

The following picture recently won 1st place at the Colorado State Fair:

Don’t know about you, but that looks extremely cool. I could totally see picking up a print of that on canvas and hanging it on my wall, if I were still in charge of decorating my house. Reminds me a bit of the splash screens for Guild Wars 2, which I have always enjoyed.

By the way, that picture was actually generated by an AI called Midjourney.

Obviously people are pissed. Part of that is based on the seeming subterfuge of someone submitting AI-generated artwork as their own. Part is based on the broader existential question that arises from computers beating humans at creative tasks (on top of Chess). Another part is probably because the dude who submitted the work sounds like a huge douchebag:

“How interesting is it to see how all these people on Twitter who are against AI generated art are the first ones to throw the human under the bus by discrediting the human element! Does this seem hypocritical to you guys?” […]

“I’m not stopping now” […] “This win has only emboldened my mission.”

It is true that there will probably just be an “AI-generated” category in the future and that will be that.

What fascinates me about the Reddit thread though, is how a lot of the comments are saying that the picture is “obviously” AI-generated, that it looks shitty, that it lacks meaning. For example:

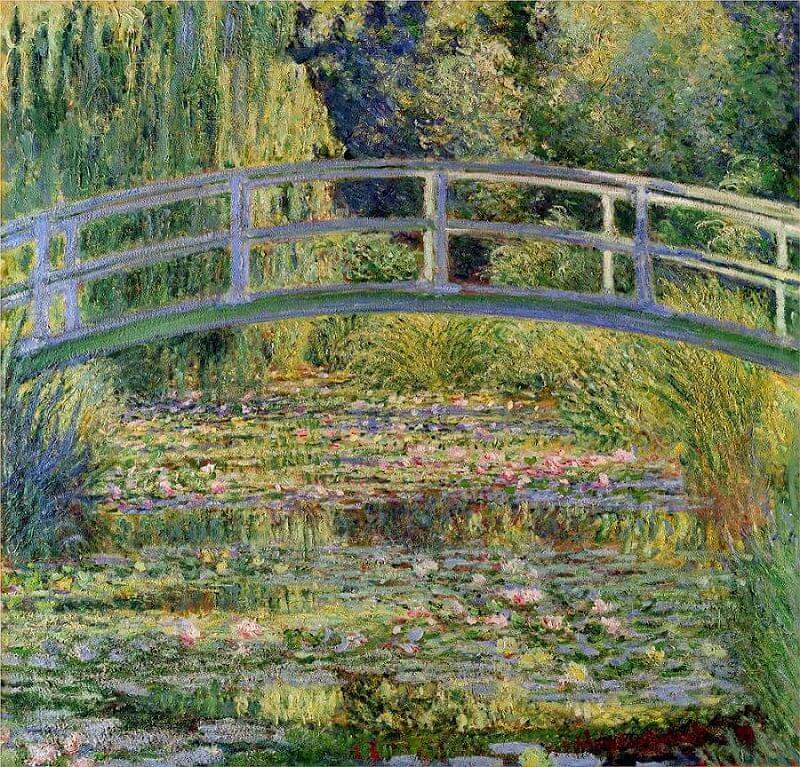

It reminds me of an article I read about counterfeit art years ago. Most of the value of a piece of artwork is tied up in its history and continuity – a Monet is valuable because it came from Monet’s hand across the ages to your home. Which is understandable from a monetary perspective. But if you just like a Monet piece because of the way it makes you feel when looking at it, the authenticity does not matter. After all, most of us have probably only seen reproductions or JPEGs of his works anyway.

At a certain point though, I have to ask the deeper question… what is a “Monet” exactly?

Monet is rather famous, of course, and his style is distinctive. But aside from a few questions on my high school Art exam decades ago, I do not know anything about his life, his struggles, his aspirations. Did he die in poverty? Did he retire early in wealth? Obviously I can Google this shit at any time, but my point is this: I like The Water Lily Pond. The way it looks, the softness of the scene, the way it sort of pulls you into a season of growth you can practically smell. Who painted it and why couldn’t matter less to me, other than possibly wanting to know where I could find similar works of this quality.

This may just say more about me than it does art in general.

I have long held the position that I do not have favorite bands, I have favorite songs. I have favorite games, not studios or directors. I have favorite movies, not actors. Some of that is probably a defense mechanism – plenty of artists turn out to be raging assholes, game companies “betray” your “trust,” and so on. If part of the appeal of a given work is wrapped up in the creator(s), then a fall from grace and the resultant dissonance is a doubled injury. Kevin Spacey is not going to ruin my memories of American Beauty or The Usual Suspects, for example. I may have a jaundiced eye towards anything new, or perhaps towards House of Cards if I ever got around to watching that, as some things cannot (or should not) be unlearned or fully compartmentalized.

So in a way, I for one welcome our new AI-art overlords.

Unlike the esteemed Snoo-4878, I do not presume that any given human artist actually adds emotion or intention into their art, or whether its presence enhances the experience at all. How would you even know they were “adding emotion?” I once won a poetry contest back in high school with something I whipped up in 30 minutes, submitted solely for extra credit in English class. Seriously, my main goal was that the first letter of each line spelled out “Humans, who are we?” Granted, I am an exceptionally gifted writer. Humble, too. But from that experience I kind of learned that the things that should matter… don’t. Second place was this brilliant emo chick who basically wrote poetry full-time. Her submission was clearly full of intention and personal emotion and it basically didn’t matter. Why would it? Art is largely about what the audience feels. And if those small-town librarians felt more emotions when hit by big words I chose because they sounded cool, that’s what matters.

Also, it’s low-key possible the emo chick annoyed the librarians on a daily basis, Vogon-style, and so they picked the first thing out of the pile that could conceivably have “won” instead of hers.

In any case, there are limits and reductionist absurdities to my pragmatism. I do not believe Candy Crush Saga is a better game than Xenogears, just because the former made billions of dollars and the latter did not. And if the value of something is solely based on how it makes you feel, then art should probably just be replaced by wires in our head (in the future) or microdoses of fentanyl (right now).

But I am also not going to pretend that typing “hubris of man monolith stars” and getting this:

…isn’t impressive as fuck. Not quite Monet, but it’s both disturbing and inspiring, simultaneously.

Which was precisely what I was going for when I made it.

Borrowed Power, Borrowed Time

The Blizzard devs have been on a bit of an interview circuit since the reveal of the next WoW expansion. Some of the tidbits have been interesting, like this particular summary (emphasis mine):

- Borrowed Power

  - The team reflected on the borrowed power systems of the past few expansions and admit that giving players power and then taking it away at the end didn’t feel good.

  - As they thought of a way to move forward without borrowed power systems, they realized that the old talent system used to fill those gaps by giving you something new every expansion that would not be taken away at the end.

  - The goal of the new talent system is to grow on it in further expansions with more layers and rows.

  - They want the new talent system to be sustainable for at least a few expansions and what to do at that point is an issue to solve then.

In other words, Blizzard recognized the failings of the “borrowed power” system – after three expansions! – and decided to bring back talent trees as a replacement. All while acknowledging the reasons why talent trees failed in the first place… and simply saying the equivalent of “we’ll jump off that bridge when we come to it.”

You know, I’m actually going to transcribe that part from Ion Hazzikostas for posterity:

And I think we’ve built this system… you know, I mean, could we sustain that for 20 years? Probably not. But we don’t realistically… we think of, you know, there’s a – there a horizon of sorts where you want to make sure this will work for two or three expansions and then beyond that it’s sort of a future us problem. Where so much will have changed between now and then we can’t… it’s not really responsible for us to like, you know, make plant firm stakes in the ground. And if we’re compromising the excitement of our designs because of we’re not sure how they’re going to scale eight years from now… we’re doing a disservice to players today and eight years from now won’t matter if we’re not making an amazing game for players today.

I don’t technically disagree. When you have an MMORPG with character progression and abilities that accumulate over time… at some point it becomes very unwieldy to maintain every system introduced. Not impossible, just unwieldy. It reminds me of when CCGs like Hearthstone or Magic: the Gathering start segmenting older card sets away from “Standard” and into “Legacy” sets. Want to play with the most broken cards from every set ever released? Sure, go have fun over there in that box. Everyone else can have fun with a smaller set of more (potentially) balanced cards over here.

Having said that… is it really an insurmountable design problem?

My first instinct was to look at Guild Wars 2, which recently released its third expansion. The game is a bit of an outlier from the get-go considering that there is no gear progression at the level cap – if you have Ascended/Legendary Berserker gear from 10+ years ago, it is still Best-in-Slot today (assuming your class/spec wasn’t nerfed). That horizontal progression philosophy bleeds over into character skills and talent-equivalents too: whatever spec you are playing, you are limited to 5 combat skills based on your weapon(s) and 5 utility skills picked from a list. You pick three talent trees, but those trees don’t “expand” or get additional nodes. The only power accumulation in GW2 is in the Mastery system… which is largely borrowed-power-esque, now that I think about it.

So GW2 is doing well in the ability/feature creep department. For now. Because that’s the rub: ArenaNet is on expansion #3. WoW is on expansion #9. Are we prepared for six more Elite Specs per class? Outside of it being a balance nightmare – which is hardly ever ArenaNet’s apparent concern – I could easily see more Elite Specs being slapped onto the UI and nothing else of note changing. So the problem is “solved” by never granting meaningfully new abilities to older specs.

And… that’s basically the extent of my knowledge of non-WoW MMOs. Surely EverQuest 1 & 2 have encountered this same issue, for example. What did they do? I think FF14 is accumulating character abilities but not yet hitting the limit of reasonableness. EVE is EVE. What else is out there that has been around long enough to run into this? Runescape?

Regardless, it’s an interesting conundrum whereby the choices appear to be A) not grant new abilities with each expansion, B) have Borrowed Power systems, or C) periodically “reset” and prune character abilities before reintroducing them.

Inflation

Amidst all the gaming sales this holiday season was a surprise. A most unwelcome one.

First was the surprise that the PC version of the Final Fantasy 7 Remake (FF7R) even came out. I was so giddy when the original news came out in 2015, but that giddiness has been tempered by years of self-restraint from not purchasing a PS4 to play just that game, and the constant endeavor to avoid spoilers. Somehow that avoidance must have led me to disregard news articles that the PC version was coming out. The fact that FF7R is an Epic exclusive also didn’t even register. But that’s because…

Secondly, seventy what-the-fuck dollars?!

I understand that FF7R is by no means the first to try to raise the hitherto $60 price ceiling of games. Many games of this new console generation are trying the same, including major franchises. It does seem a little weird that the PC port of a game that came out 1.5 years ago is trying to sell at a premium price though. Especially since one could purchase the PS5 version of the same PC bundle (main game + DLC) for $39.19 straight from the PlayStation Store. That’s the winter sale price, of course, but there are cheaper options at GameStop and presumably other retailers.

I also understand that gaming companies have technically been raising prices this whole time via DLC and microtransactions and battle passes and deluxe editions and so on and so forth. Some have made the argument that it is because of the $60 price ceiling that game companies have employed black hat econ-psychologists to invent ever more pernicious means of eroding consumer surplus. That argument is, of course, ridiculous: they would simply do both, as they do today.

What I do not understand is gaming apologists suggesting inflation is the reason for $70 games.

Sometimes the apologists make the argument that games have not kept pace with inflation for years. One apt example is how Final Fantasy 6 (or 3 at the time) on the SNES retailed for $79.99 back in 1994. That is literally $150 in 2021 money. Thing is… gaming was NOT mainstream back in 1994; the market was tiny, and dominated by Japan. When you are comparable in size to model train enthusiasts, you pay model train enthusiast prices.

Gaming has been mainstream for decades now. Despite ever-increasing budgets and marketing costs, games remain a high-margin product. FF6 may have sold for $150 in today’s dollars, but FF7 sold three times as many copies for the equivalent of $100 by 2003*. So how does an “inflation” argument make sense there?

“The costs for making games have increased!” I mean… yes, but also no? Developers like to pretend that they need bleeding-edge graphics in order to sell games, but that is clearly not the case everywhere. For one thing, indie developers have been killing it with some of the best titles this decade with pixel graphics and small-group passion projects. Stardew Valley sold how many copies? Remember when Minecraft sold for $2 billion? Not everyone is a big winner, but the costs of game making have only increased in specific genres with specific designs. Do we really need individually articulated and dynamically moving ass-hair on our protagonists?

And that’s where the “iT’s iNfLaTiOn” folks really lose me: who gives a shit about these corporations? I wrote about this 8 years ago:

As a consumer, you are not responsible for a company’s business model. It is perfectly fine to want the developers to be paid for their work, or to wish the company continued success. But presuming some sort of moral imperative on the part of the consumer is not only impossible, it’s also intellectually dishonest. You and I have no control over how a game company is run, how much they pay their staff, what business terms they ink, or how they run their company. Nobody asked EA to spend $300+ million on SWTOR. Nobody told Curt Schilling to run 38 Studios into the ground. Literally nobody wanted THQ to make the tablet that bankrupted the studio.

Why should we take it as a given that PlayStation 5 games cost more to develop? A lot of things in the economy actually get cheaper over time, regardless of inflation. Things like… computers and software. Personnel costs may usually only trend upwards, but again, someone else made the decision to assign 300 people to a specific game instead of 250. Or to scrap everything and start over halfway through the project. And somehow these companies continue making money hand over fist without $70 default pricing. So I find it far more likely that the price increase is a literal cash grab in the same way the airline industry added billions in miscellaneous fees after their bailouts and “forgot” to remove them after they recovered. Basically, because they could. Some informal industry collusion helps.

In summation: fuck the move towards legitimizing $70 MSRP. That 17% price hike is not going to result in 17% better games with 17% deeper stories and 17% more fun. In fact, it’s probably the opposite in that you will just afford 14% fewer games. And unless you got a 6% raise in 2021, you are already eating a pay cut on top of that.
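For anyone who wants to check the math on a $60-to-$70 move, here is a quick sketch. The function names are made up for illustration, and the "fixed budget" framing is my assumption about how to read "afford fewer games." Measured against the old price, the increase is about 16.7%; measured as the drop in how many games the same budget buys, it is about 14.3%.

```python
def price_hike_pct(old_price: float, new_price: float) -> float:
    """Percentage increase relative to the old price."""
    return (new_price - old_price) / old_price * 100


def fewer_games_pct(old_price: float, new_price: float) -> float:
    """Percentage drop in how many games a fixed budget can buy."""
    return (1 - old_price / new_price) * 100


old, new = 60.0, 70.0  # the old and new MSRP discussed above
print(f"Price hike: {price_hike_pct(old, new):.1f}%")
print(f"Fewer games on the same budget: {fewer_games_pct(old, new):.1f}%")
```

Both numbers describe the same $10, just from different directions, which is why the two percentages never quite match.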

Oh well. Waited this long for FF7R, so I may as well wait some more.

Priorities

The hardest thing is starting. The second hardest is continuing.

In the past few weeks, I have formulated zero long-term gaming memories. I have continued to throw myself into Guild Wars 2 and Hearthstone, making quite some “progress” in both. The time passes easily enough. And I am entertained during play. But I couldn’t tell you specifically what I was doing last Tuesday. I cannot present an argument for why you should (or shouldn’t) play GW2 or Hearthstone in a way that did not already exist a month ago.

Things happened, but nothing changed.

It is a tad early for resolutions, but here is mine: commit to distinct experiences. Any given MMO can consume thousands (or more) of hours of your time. It is indeed a great value, in comparison to how much money you would have had to spend on the equivalent games. Journey is what, 2-3 hours? And yet the experience of Journey remains a core memory eight years later. That music, the visuals, that nameless stranger who guided me to the summit. Would I have traded 100 Winterberries for that experience? It’s absurd, and yet I find myself doing that every day.

Prose aside, this desire came from a Reddit post talking about how there would be no Dark Souls without ICO. While I have not played Dark Souls much – despite owning several of them – I understood the sentiment because I played ICO. And yet how many people out there never did, or ever will? That game is a transformative experience. One that predated my first contact with MMOs. What if I… hadn’t? Too busy with WoW or whatever? Could there be an ICO in my unplayed gaming hoard right now?

Now, I’m not actually expecting to find another ICO in my library. And this sentiment is different than the sort of vague, “I should play everything just in case it’s genius.” I’m also still planning on squeezing in some MMO time in there too, assuming I’m not hooked on something else. But! Let’s take some baby steps towards the thing I actually want to do – generate unique experiences worth talking about – and not get sucked into killing time all the, er, time.

It’s silly, but here’s my starting list:

- Death Stranding

- Undertale

- SOMA

- To the Moon

- Legend of Heroes: Trails in the Sky

- Final Fantasy 15

Some are 100s of hours, some are less so, some aren’t going to be worth it. Final Fantasy 15, for example, gets shit on a lot. Let’s see why, eh? I’m getting better at dropping “good” games that have exhausted their novelty, like Dishonored 2 and Subnautica: Below Zero, so that shouldn’t be a factor.

I owe it to myself to give these games (and others) a chance. Especially since, you know, I already own them. I’m not going to find my next Xenogears just doing daily quests all the goddamn time.

Unsustainability

May 27

Posted by Azuriel

Senua’s Saga: Hellblade 2 recently came out to glowing reviews and… well, not so glowing concurrent player counts on Steam. Specifically, it peaked at about 4000 players, compared to 5600 for the original game back in 2017, and compared to ~6000 for Hi-Fi Rush and Redfall. The Reddit post where I found this information has the typical excuses, e.g. it’s all Game Pass’s fault (it was a Day 1 release):

Now, it’s worth pointing out that concurrent player counts are not precisely the best way to measure the relative success of a single-player game. Unless, I suppose, you are Baldur’s Gate 3. Also, Hellblade 2 is a story-based sequel to an artistic game that, as established, only hit a peak of 5600 concurrent players. According to Wikipedia, the original game sold about 1,000,000 copies by June 2018. Thus, one would likely presume that the sequel would sell roughly the same amount or less.

The thing that piqued my interest though, was the reply that came next:

I mean… sure. But there’s an unspoken assumption here that these small games with gigantic, 5-6 year budgets would be justified even without being on a subscription service. See hot take:

Gaming’s “sustainability problem” has long been forecast, but it does feel like things have more recently come to a head. It is easy to villainize Microsoft for closing down, say, the Hi-Fi Rush devs a year after soaking up their accolades… but good reviews don’t always equate to profit. Did the game even make back its production costs? Would it be fiduciarily responsible to make the bet in 2024, that Hi-Fi Rush 2 would outperform the original in 2030?

To be clear, I’m not in favor of Microsoft shutting down the studio. Nor do I want fewer of these kinds of games. Games are commercial products, but that is not all they can be. Things like Journey can be transformative experiences, and we would all be worse off for them not existing.

Last post, I mentioned that Square Enix is shifting priorities of their entire company based on poor numbers for their mainline Final Fantasy PS5 timed-exclusive releases. But the fundamental problem is a bit deeper. At Square Enix, we’ve heard for years about how one of their games will sell millions of copies but still be considered “underperforming.” For example, the original Tomb Raider reboot sold 3.4 million copies in the first month, but the execs thought that made it a failure. Well, there was a recent Reddit thread about an ex-Square Enix executive explaining the thought process. In short:

That… makes sense. One might even say it’s basic economics.

However, that heuristic also seems outrageously unsustainable in and of itself. Almost by definition, very few companies beat “the market.” Especially when the market is, by weight, Microsoft (7.16%), Apple (6.12%), Nvidia (5.79%), Amazon (3.74%), and Meta (2.31%). And 495 other companies, of course. As an investor, sure, why pick a videogame stock over SPY if the latter has the better return? But how exactly does one run a company this way?

Out of curiosity, I found a site to compare some game stocks vs SPY over the last 10 years:

I’ll be goddamned. They do usually beat the market. In case something happens to the picture:

And it’s worth pointing out that Square Enix was beating the market in August 2023 before a big decline, followed by the even worse decline that we talked about recently. Indeed, every game company in this comparison was beating SPY, before Ubisoft started declining in 2022. Probably why they finally got around to “breaking the glass” when it comes to Assassin’s Creed: Japan.

Huh. This was not the direction I thought this post was going as I was writing it.

Fundamentally, I suppose the question remains as to how sustainable the videogame market is. The ex-Square Enix executive Reddit post I linked earlier has a lot more things to say on the topic, actually, and I absolutely recommend reading through it. One of the biggest takeaways is that major studios are struggling to adjust to the new reality that F2P juggernauts like Fortnite and Genshin Impact (etc) exist. Before, they could throw some more production value and/or marketing into their games and be relatively certain to achieve a certain amount of sales as long as a competitor wasn’t also releasing a major game the same month. Now, they have to worry about that and the fact that Fortnite and Genshin are still siphoning up both money and gamer time.

Which… feels kind of obvious when you write it out loud. There was never a time when I played fewer other games than when I was in the throes of WoW (or MMOs in general). And while MMOs are niche, things like Fortnite no longer are. So not only do they have to beat out similar titles, they have to beat out an F2P title that gets huge updates every 6 weeks and has been refined to a razor edge over almost a decade. Sorta like how Rift or Warhammer or other MMOs had to debut into WoW’s shadow.

So, is gaming – or even AAA specifically – really unsustainable? Possibly.

What I think is unsustainable are production times. I have thought about this for a while, but it’s wild hearing about some of the sausage-making reporting on game development. My go-to example is always Mass Effect: Andromeda. The game spent five years in development, but it was pretty much stitched together in 18 months, and not just because of crunch. Perhaps it is unreasonable to assume the “spaghetti against the wall” phase of development can be shortened or removed, or I am not appreciating the iteration necessary to get gameplay just right. But the Production Time lever is the only one these companies can realistically pull – raising prices just makes the F2P juggernaut comparisons worse, gamer ire notwithstanding. And are any of these games even worth $80, $90, $100 in the first place?

Perversely, even if Square Enix and others were able to achieve shorter production times, that means they will be pumping out more games (assuming they don’t fire thousands of devs). Which means more competition, more overlap, and still facing down the Fortnite gun. Pivoting to live service games to more directly counter Fortnite doesn’t seem to be working either; none of us seem to want that.

I suppose we will have to see how this plays out over time. The game industry at large is clearly profitable and growing besides. We will also probably have the AAA spectacles of Call of Duty and the like that can easily justify the production values. Similarly, the indie scene will likely always be popping, as small team/solo devs shoot their shot in a crowded market, while keeping their day jobs to get by.

But the artistic AA games? Those may be in trouble. The only path for viability I see there is, ironically, something like Game Pass. Microsoft is closing (now internal) studios, yes, but it’s clearly supporting a lot of smaller titles from independent teams and giving them visibility they may not otherwise have achieved. And Game Pass needs these sorts of games to pad out the catalog in-between major releases. There are conflicting stories about whether the Faustian Game Pass Bargain is worth it, but I imagine most of that is based on a post-hoc analysis of popularity. Curation and signal-boosting are only going to become increasingly required to succeed for medium-sized studios.

Posted in Commentary, Philosophy

Tags: Armchair Game Development, Microsoft, Square Enix, Unsustainable, Xbox Game Pass